...making Linux just a little more fun!

Mailbag

This month's answers created by:

[ Amit Kumar Saha, Ben Okopnik, Kapil Hari Paranjape, Karl-Heinz Herrmann, René Pfeiffer, Rick Moen, Robos, Thomas Adam ]

...and you, our readers!

Still Searching

adesklets

J. Bakshi [j.bakshi at unlimitedmail.org]

Wed, 18 Mar 2009 22:15:55 +0530

Dear all,

I have already moved to icewm, idesk, claws-mail, aterm, VLC, audacious, etc., along with some other lightweight applications, to get more speed out of my Linux box.

CPU -- AMD Duron 1.5 GHz

RAM -- 128 MB

Swap -- 512 MB

Video Shared memory -- 8 MB

I'm happy with the quick response time of my system (fast compared to KDE). I would also like to give my desktop a nice look with a calendar, clock, volume control, etc. Hence I am thinking of using adesklets, as it is fast due to imlib2. I know some of you are using adesklets. What is your experience with it? Is it the right choice for a system like mine?

Please share your thoughts. Kindly CC me.

Thanks

Doubt...

Deepti R [deepti.rajappan at gmail.com]

Tue, 24 Mar 2009 09:04:17 +0530

Hello,

I have read your article at https://linuxgazette.net/136/anonymous.html. I am trying to write a small driver program; I have written it, but it needs a little redesigning. So it would be great if any of you could help me. I am posting my question below.

I wrote a simple keyboard driver program which detects the Ctrl+K sequence [I have written the code to handle only Ctrl+K].

I also have a simple application program which does a normal

multiplication function.

I need to invoke that application program from my driver when I press Ctrl+K on my keyboard [once I press Ctrl+K, the driver sends a SIGUSR1 signal to my application program, which catches the signal and performs the multiplication]. I can do that if I hardcode the PID of the application program [the PID of the a.out file] in the driver, i.e., in the call kill_proc(5385, SIGUSR1, 0), the first parameter is the PID of the application program.

But this is not a correct method: every time, I need to compile the application program, open the driver, add the PID to it, compile it using the Makefile, and then insert the .ko file. It doesn't look good. I tried using -1 as the first parameter to kill_proc() [to send the signal to all the processes that are listening]; since my application program is also listening for the signal, ideally it should catch SIGUSR1 and act on it, but it's not working...

Can you suggest any method to send the SIGUSR1 signal from my driver [which is in kernel space] to the application program [which is in user space]?

I could also achieve this by having an entry for the PID in /proc, but that is not a good design. Can it be implemented through ioctl?

I am pasting my codes below...

[ ... ]

[ Thread continues here (1 message/7.20kB) ]

Trying DTrace on Linux

Amit k. Saha [amitsaha.in at gmail.com]

Thu, 5 Mar 2009 10:47:14 +0530

Hello all,

The Linux port of DTrace has been in the works for some time now.

I just tried the latest bits from ftp://crisp.dynalias.com/pub/release/website/dtrace, and my initial impression is that we've got really cool stuff in the making here.

Besides GCC and the kernel headers, you will need the following to compile and load the DTrace kernel module:

* libelf-dev: for working with ELF files

* zlib libraries: for working with zlib-compressed data

* bison, flex

Once you have got them, extract the sources and do:

1. make all

2. sudo make install

3. sudo make load

If you do not see any error message, then the DTrace kernel module 'dtracedrv' has been correctly inserted. 'dtrace -l' should display a long list of the currently available probes.

Read the rest at https://amitksaha.blogspot.com/2009/03/dtrace-on-linux.html.

(The code doesn't format properly here, hence the link to the blog)

Best,

Amit

--

Amit Kumar Saha

https://amitksaha.blogspot.com

https://amitsaha.in.googlepages.com/

*Bangalore Open Java Users Group*: https://www.bojug.in
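The three build steps Amit lists can be wrapped in a small function that first checks for the build tools. This is a minimal sketch, assuming the sources are already extracted and you run it from their top-level directory; `missing_tools` and `build_dtrace` are hypothetical names, and only commands are checked, since libelf-dev and the zlib libraries are libraries rather than programs.

```bash
#!/bin/bash
# Hypothetical helper: print the names of any commands not found in $PATH.
missing_tools() {
    local t missing=()
    for t in "$@"; do
        command -v "$t" >/dev/null 2>&1 || missing+=("$t")
    done
    echo "${missing[*]}"
}

# Build, install and load the DTrace module, then show the start of the
# probe list. Assumes the current directory is the top of the DTrace sources.
build_dtrace() {
    local m
    m=$(missing_tools gcc make bison flex)
    if [ -n "$m" ]; then
        echo "Missing build tools: $m" >&2
        return 1
    fi
    make all && sudo make install && sudo make load || return 1
    # 'dtrace -l' lists the available probes (it may need root)
    dtrace -l | head -n 20
}
```

Checking the tools up front simply turns a confusing mid-build failure into a one-line message naming what to install.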

Our Mailbag

siggen problem

Ben Okopnik [ben at linuxgazette.net]

Mon, 30 Mar 2009 00:25:41 -0400

[ Again, Arild - please remember to CC the list. ]

On Sun, Mar 29, 2009 at 08:16:45PM -0700, deloresh wrote:

> Ben is this what you mean?

> I am pretty sure I copied every word correctly, then sent it to a

> friend's address. Then it got bounced back to me on my Windows computer.

>

> ----- Original Message ----- From: <2elnav@netbistro.com>

> To: <catluv@telus.net>

> Sent: Sunday, March 29, 2009 8:11 PM

> Subject: siggen problem

> arild@Arildlinux:~$

> arild@Arildlinux:~$ siggen

> siggen: Display signature function values.

> Tripwire(R) 2.3.1.2 built for

> Tripwire 2.3 Portions copyright 2000 Tripwire, Inc. Tripwire is a registered

> trademark of Tripwire, Inc. This software comes with ABSOLUTELY NO WARRANTY;

> for details use --version. This is free software which may be redistributed

> or modified only under certain conditions; see COPYING for details.

> All rights reserved.

> Use --help to get help.

> arild@Arildlinux:~$

Well done, Arild! Yep, exactly what I mean.

What this implies to me is that 1) you have "tripwire" installed (which

you don't need), and 2) that you don't have the "siggen" package (as

contrasted against the "siggen" program) installed. If you had both, the

latter would normally get executed first - because it gets installed in

a "higher priority" directory.

ben@Tyr:~$ apt-file search bin/siggen

siggen: /usr/bin/siggen

tripwire: /usr/sbin/siggen

In the default execution path, "/usr/bin" comes before "/usr/sbin" - so

"/usr/bin/siggen" would get executed first. Here's what you need to do

to fix it:

sudo dpkg -P tripwire

sudo apt-get install siggen

That should take care of it. After you've run the above two commands,

you should be able to type "siggen" at the command line and see the

sound generator application.

--

* Ben Okopnik * Editor-in-Chief, Linux Gazette * https://LinuxGazette.NET *

[ Thread continues here (4 messages/6.88kB) ]
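Bash's built-in `type -a siggen` lists every match for a command in lookup order. As a sketch of what that lookup does, the hypothetical `path_matches` function below walks `$PATH` from left to right and prints each executable with the given name; the first line printed is the one the shell will actually run.

```bash
#!/bin/bash
# Sketch: list every executable called "$1" in $PATH, in lookup order.
path_matches() {
    local cmd=$1 dir
    local IFS=:                      # split $PATH on colons
    for dir in $PATH; do
        [ -n "$dir" ] || dir=.       # an empty PATH entry means the current directory
        [ -x "$dir/$cmd" ] && [ ! -d "$dir/$cmd" ] && echo "$dir/$cmd"
    done
    return 0
}
```

Run as `path_matches siggen`, it would be expected to show only /usr/sbin/siggen while just tripwire is installed, and /usr/bin/siggen first once the siggen package is added.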

need your suggestion to select linux tools and configure idesk

J.Bakshi [j.bakshi at icmail.net]

Wed, 11 Mar 2009 23:02:27 +0530

Dear list,

I am in the process of optimizing my Linux box with lightweight tools. I already have icewm running with the geany editor, parcellite clipboard, audacious, aterm, and claws-mail. Some more applications are missing, and I am seeking your kind advice here.

1> What might be a small, fast sound mixer applet to use with icewm?

2> A screen capture tool that can fit in the icewm taskbar and has features like ksnapshot.

I still have a problem with idesk. It can't display the wallpaper even though the path to the Background file is correct. It always shows errors like

~~~~~~~~~~~~~~~~~~~~

[idesk] Background's file not found.

[idesk] Background's source not found.

~~~~~~~~~~~~~~~~~~~~~~

Please suggest,

Thanks

[ Thread continues here (12 messages/16.07kB) ]

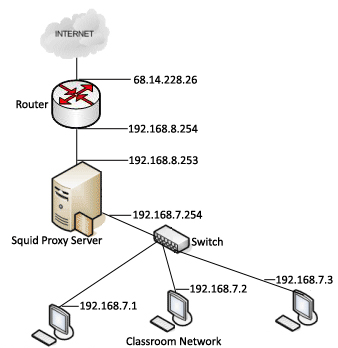

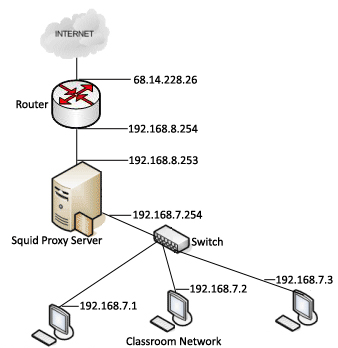

Proxy + firewall configuration on linux

Deividson Okopnik [deivid.okop at gmail.com]

Wed, 4 Mar 2009 11:52:42 -0300

Hello everyone.

I need to configure a temporary Internet server here, and after reading a lot, I'm kinda confused :P

I need a non-transparent proxy (one that asks users for their username/password), with the ability to block access to certain pages and services (like www.orkut.com or MSN), plus the ability to generate usage reports.

I saw several programs that can do that, but each article I read uses a different combo - that's what confused me.

So, the question is, what software would you use to create such a

configuration?

Thanks for the input

Deividson

[ Thread continues here (6 messages/6.61kB) ]

Slight tweak to your editorial note

Rick Moen [rick at linuxmafia.com]

Tue, 24 Mar 2009 15:51:55 -0700

Almost not worth mentioning, but I've made a substantive albeit de minimis addition to your editorial note, consisting of the words "non-root":

<p class="editorial">[ A common application of this would be to run a Web

or FTP server chrooted in a directory like /home/www or

/home/ftp; this provides an excellent layer of security, since even a

malicious non-root user who manages to crack that server is stuck in a

"filesystem" that contains few or no tools, no useful files other than

the ones already available for viewing or downloading, and no way to get

up "above" the top of that filesystem. This is referred to as a "chroot

jail". -- Ben ]

Take my word for it, without that qualifier, you'd attract quibbles from

people repeating the usual mantra: "chroot(8) is not root safe."

(Ditto the chroot() system call.)

That is, the root user, and thus also any process that can escalate to UID0 privilege, can trivially escape from any chroot jail:

https://kerneltrap.org/Linux/Abusing_chroot

https://unixwiz.net/techtips/chroot-practices.html

https://www.bpfh.net/simes/computing/chroot-break.html

(You'll note that the article lists other, indirect ways of escalating privilege, plus "Why would anyone put that in a chroot jail?" methods such as "Follow a pre-existing hard link to outside the jail.")

If you want to be almost safe against kibitzers writing in to say "chroot is not a security tool!" (another common mantra), amend your footnote to say that the tool must be used with care, as some known means exist to attack it, and that it's no substitute for eschewing dangerous software and configurations. And maybe link to one or more of those articles.

[ Thread continues here (3 messages/6.04kB) ]

how to read /dev/pts/x?

Mulyadi Santosa [mulyadi.santosa at gmail.com]

Fri, 20 Mar 2009 16:49:50 +0700

Hi Gang...

here's the situation:

Suppose I log on to server A twice using the same user ID (let's say johndoe). Technically, Linux on server A will create two /dev/pts entries for johndoe, likely /dev/pts/0 and /dev/pts/1.

Is there any way for johndoe on pts/0 to read what the other johndoe types on pts/1? Possibly in real time? Initially I thought it could be done using the "history" command (in the bash shell), but that failed.

Thanks in advance.

regards,

Mulyadi.

[ Thread continues here (4 messages/4.06kB) ]

MacroMedia Flash and accessibility

Jim Jackson [jj at franjam.org.uk]

Fri, 13 Mar 2009 20:59:25 +0000 (GMT)

I know this is not strictly "Linux", but I think it's ok...

What's the current thinking about Macromedia Flash and accessibility? I've done some googling, and many of the accessibility guides I've found are fairly old (> 5 years).

Reason I'm asking is that an organisation I help out has had someone

volunteer to do them a new website. The initial new homepage is entirely

macromedia flash.

In general I'm severely prejudiced against flash, but I want to give them

considered balanced advice that meets their requirements re.

- accessibility

- ability to do future changes/updates to info on the web pages

any comments/advice welcome

cheers

Jim

[ Thread continues here (4 messages/3.36kB) ]

VMware installation problem

Adegbolagun Adeola [adecisco_associate at yahoo.com]

Sun, 1 Mar 2009 06:07:04 -0800 (PST)

Hello Deividson

Can you help me fix the problem below? I interrupted a VMware installation earlier, and now when I try to install it again, it comes up with the following:

I will appreciate your help.

root@adey-laptop:/home/adey/Documents/vmware-server-distrib#

./vmware-install.pl

A previous installation of VMware Server has been detected.

The previous installation was made by the tar installer (version 4).

Keeping the tar4 installer database format.

You have a product that conflicts with VMware Server installed.

Continuing

this install will first uninstall this product. Do you wish to continue?

(yes/no) [yes] y

Error: Unable to execute

"/media/DATA_DRIVE/Virtual-Machine-File/vmware-uninstall.pl.

Uninstall failed. Please correct the failure and re run the install.

Execution aborted.

Thanks

[ Thread continues here (3 messages/6.92kB) ]

Google Summer of Code

Jimmy O'Regan [joregan at gmail.com]

Thu, 19 Mar 2009 11:07:21 +0000

Google have announced the list of mentor organisations for this year's

GSoC: https://socghop.appspot.com/program/accepted_orgs/google/gsoc2009

Apertium is on it this year

GUI for idesk ??

Thomas Adam [thomas.adam22 at gmail.com]

Mon, 2 Mar 2009 07:14:42 +0000

2009/3/2 Ben Okopnik <ben@linuxgazette.net>:

> So, if you're willing to wait a couple of days - our next issue comes

> out on the 1st - you'll have access to a very nice GUI for idesk. As far

> as I know, there isn't one available outside of that.

"idconf" or some such name is what I recall of there being an idesk GUI.

-- Thomas Adam

[ Thread continues here (7 messages/9.05kB) ]

Folder Sync on Linux

Deividson Okopnik [deivid.okop at gmail.com]

Mon, 16 Mar 2009 17:31:53 -0300

Hello TAG!

I'm doing some PHP coding on my machine, and I have Apache running on another machine. I set up a shared folder, and every time I want to test something, I put it in the shared folder, then switch to the other machine (on a KVM switch) and do "sudo cp -r * /var/www" and "sudo rm -r *" (in the shared folder, of course), then switch back to my machine, and so on.

Question is - is there any simple way of automating that? I don't want complex systems; I want something that detects when there is any file in the shared folder and then moves it to /var/www.

So, do any of you have something that does that?

Thanks for the attention

Deividson

[ Thread continues here (4 messages/2.77kB) ]
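A minimal polling sketch of what Deividson describes, assuming it runs on the Apache machine with write access to the web root (e.g. under sudo). SHARED and WEBROOT are placeholder paths rather than anything from his setup, and `sync_once` is a hypothetical name; it simply repeats his "cp -r" and "rm -r" pair, deleting the originals only if the copy succeeded.

```bash
#!/bin/bash
# Sketch: move whatever appears in a shared folder into the web root.
# SHARED and WEBROOT are placeholder paths; override them in the environment.
SHARED=${SHARED:-/srv/shared}
WEBROOT=${WEBROOT:-/var/www}

# One pass of "cp -r * /var/www" followed by "rm -r *" in the shared folder
sync_once() {
    local src=$1 dst=$2 items
    shopt -s nullglob dotglob      # empty folder -> empty list; include dotfiles
    items=("$src"/*)
    shopt -u nullglob dotglob
    [ ${#items[@]} -eq 0 ] && return 0
    # Only delete the originals if the copy succeeded
    cp -r -- "${items[@]}" "$dst"/ && rm -rf -- "${items[@]}"
}

# Poll every 5 seconds when started with "watch" as the first argument
if [ "$1" = "watch" ]; then
    while true; do
        sync_once "$SHARED" "$WEBROOT"
        sleep 5
    done
fi
```

Saved as, say, sync.sh, it could be started with `sudo SHARED=/path/to/share bash sync.sh watch`. Polling can pick up a file that is still being written; the inotifywait tool from inotify-tools, or `rsync -a --remove-source-files`, are alternatives worth comparing.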

Securing a Network - What's the most secure Network/Server OS? - Is there a secure way to use Shares?

Rick Moen [rick at linuxmafia.com]

Sun, 1 Mar 2009 10:43:15 -0800

A creative way to deal with "homework" questions.

----- Forwarded message from Wade Richards <wade@wabyn.net> -----

Date: Sun, 01 Mar 2009 09:13:40 -0800

From: Wade Richards <wade@wabyn.net>

To: Chip Panarchy <forumanarchy@gmail.com>

CC: debian-security@lists.debian.org

X-Mailing-List: <debian-security@lists.debian.org> archive/latest/23028

Subject: Re: Securing a Network - What's the most secure Network/Server OS?

- Is there a secure way to use Shares?

This sounds a lot like "I'm taking a course, and I'd like the Internet

to do my homework for me." I'll give you generally correct advice,

with enough lies in here to give you a failing grade if you don't verify

my statements.

If I were setting up a system as you described, I'd focus on what the

network clients are capable of, and what requires the least non-standard

configuration on them (because misconfiguration of the client

workstation is an easy way to introduce insecurity, and it's hard for

you to enforce their config).

The Windows boxes want Windows networking, the Unix-like ones want Unix

networking. A Unix server is most likely to give you both easily,

although almost any server OS can.

So the servers should be running SAMBA for Windows logon and network

shares, plus LDAP and NFS for Unix logon and sharing. SAMBA can be

configured to authenticate against the local LDAP server, so it can

become your single source of knowledge for user accounts. You can share

the same directories on the server via SAMBA and NFS, so they become

your centralized storage.

Encrypting network traffic is very much the least of your concern. So

many people think security means "encrypt stuff!", when it is the high

level protocols (logon, authorization) that matters. Nobody will bother

with packet sniffing when they can just read the files directly from the

file server. Besides, in a wired network, the switches will ensure

packets only go to the machines where they are supposed to be, so

sniffing is pointless. If you really want to waste your time, ipsec, or

tunneling NFS through SSL will work (wireless should use WPA2 with as

many bits as makes you happy).

To make the network fast, you should grease your network cables.

Security can be improved by adding cable locks to all the computers, and

putting in a steel door with a deadbolt, and bars on the windows.

[ ... ]

[ Thread continues here (1 message/6.95kB) ]

How do you format ftp or sftp for transferring files?...

Don Saklad [dsaklad at gnu.org]

Sun, 08 Mar 2009 16:23:26 -0400

How do you format ftp or sftp for transferring files?...

[ Thread continues here (10 messages/14.08kB) ]

[idesk] Background's file not found

J.Bakshi [j.bakshi at icmail.net]

Sun, 8 Mar 2009 15:56:55 +0530

Hello Ben and all,

I am now using icewm with idesk (Version: 0.7.5-4). My setup is still missing the wallpaper. Every time, idesk reports

~~~~~~~~~~~~~~~~~~~~~~~~~

[idesk] Background's file not found.

[idesk] Background's source not found.

~~~~~~~~~~~~~~~~~~~~~~~~~~

even though I have the proper paths set:

~~~~~~~~~~~~~~~~~~~~~~~~~~~

Background.Delay: 1

Background.Source: /home/joy/pics/Father

Background.File: /home/joy/pics/Father/love.jpg

Background.Mode: Center

Background.Color: #FFFFFF

~~~~~~~~~~~~~~~~~~~~~~~~

I have even checked that image in KDE, and used the image folder as the source of a slideshow in KDE. Everything works well. I don't know why idesk gives the error. The background color is blue, which is provided by icewm!!!

Any clue ?

Thanks

[ Thread continues here (2 messages/3.54kB) ]
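One quick sanity check is to confirm that the paths idesk reads really exist as written, stray whitespace and all. The hypothetical `check_idesk_bg` function below is a sketch that assumes the config lives in ~/.ideskrc (pass another file as its argument otherwise) and uses the "Key: value" layout shown above; it trims indentation and surrounding blanks, then tests each Background.File and Background.Source path.

```bash
#!/bin/bash
# Sketch: report whether the Background.File and Background.Source paths in
# an idesk config exist. ~/.ideskrc as the default file is an assumption.
check_idesk_bg() {
    local rc=${1:-$HOME/.ideskrc} key value status=0
    while IFS=: read -r key value || [ -n "$key" ]; do
        key=${key//[[:space:]]/}                    # drop indentation
        value=${value#"${value%%[![:space:]]*}"}    # trim leading blanks
        value=${value%"${value##*[![:space:]]}"}    # trim trailing blanks
        case $key in
            Background.File|Background.Source)
                if [ -e "$value" ]; then
                    echo "ok: $key: $value"
                else
                    echo "MISSING: $key: $value"
                    status=1
                fi ;;
        esac
    done < "$rc"
    return $status
}
```

If both lines report ok, the paths themselves are fine and the cause lies elsewhere, which this check cannot tell you.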

how to convert from kmail to sylpheed-claws?

J.Bakshi [j.bakshi at icmail.net]

Sun, 8 Mar 2009 15:59:19 +0530

Dear list,

Is there any way to convert the emails (maildir format) and account information (pop3, smtps) stored in kmail to sylpheed-claws?

Thanks

[ Thread continues here (7 messages/7.95kB) ]

linking my sound card to xoscope

Arild Jensen [2elnav at netbistro.com]

Fri, 27 Mar 2009 18:11:01 -0700

Ben Okopnik recommended a program called "xoscope" and gave a link to a website showing how to build an input circuit or use a sound card.

I now have the sound card microphone input working and the xoscope display up, but the sound from the mike goes to the speakers, not the xoscope display. What do I do to link the two?

I'm a newcomer to Linux, so please go easy with the jargon. I installed Ubuntu only a month ago, and I am still learning how to use it.

regards

Arild Jensen (user name elnav)

[ Thread continues here (24 messages/46.16kB) ]

Squid problem (TCP_MISS 504)

Deividson Okopnik [deivid.okop at gmail.com]

Thu, 5 Mar 2009 16:38:38 -0300

Hello everyone.

I just finished installing/configuring squid on an Ubuntu 8.10 server, and I'm having the following problem:

Clients time out when trying to access any webpage - access.log gives me:

179383 192.168.0.1 TCP_MISS/504 2898 GET https://www.google.com/ -

DIRECT/209.85.193.104 text/html

After reading about it, I thought adding "no_cache allow localnet" to my squid.conf file would fix the problem, but it doesn't (I already have an ACL saying localnet = 192.168.0.0/255.255.255.0 and an "http_access allow localnet" in the same config file).

Anyone know what might be the problem?

Thanks

Deividson

[ Thread continues here (5 messages/4.71kB) ]
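When chasing errors like this, it can help to see how often each result code turns up. A sketch, assuming Squid's native access.log format (where the fourth field is normally the ACTION/STATUS code; the excerpt above appears to have lost its leading timestamp). Rather than rely on a field number, the hypothetical `squid_codes` function tallies whichever field looks like CODE/NNN. The default path /var/log/squid/access.log is an assumption.

```bash
#!/bin/bash
# Sketch: tally Squid result codes (e.g. TCP_MISS/504) in an access log.
# The default log path is an assumption; pass the real path as $1.
squid_codes() {
    local log=${1:-/var/log/squid/access.log}
    # Count the first field on each line shaped like ACTION/NNN,
    # then print "count code" pairs, most frequent first
    awk '{ for (i = 1; i <= NF; i++)
               if ($i ~ /^[A-Z_]+\/[0-9][0-9][0-9]$/) { count[$i]++; break } }
         END { for (c in count) print count[c], c }' "$log" | sort -rn
}
```

A log in which every request shows TCP_MISS/504 points at Squid failing to reach the origin servers at all, rather than at a per-site problem.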

Cut and paste between two computers. Was linking my sound card to xoscope

[2elnav at netbistro.com]

Mon, 30 Mar 2009 00:06:41 -0700

[[[ Oh dear...somehow, the quote attribution got lost, but Arild is

responding to a previous comment of Ben's. -- Kat ]]]

> Well done, Arild! Yep, exactly what I mean.

REPLY

This cutting and pasting between various screens on two different computers is a real PITA.

As you can see from the forwarding, I also had to use a third computer on a different address. I have no way to link the two computers directly, at least none that I know of. And having to copy down letter by letter what I see on one machine to get it into the other machine is error-prone. Is there a quick way to network a Windows and a Linux machine together so the two can see each other and copy each other's files?

[ Thread continues here (5 messages/4.49kB) ]

Talkback: Discuss this article with The Answer Gang

Published in Issue 161 of Linux Gazette, April 2009

Gems from the Mailbag

This month's answers created by:

[ Ben Okopnik, Kapil Hari Paranjape, Rick Moen ]

...and you, our readers!

Editor's Note

Kapil's brilliant analogy on approaching Linux (and other unfamiliar technologies) drew instant kudos from The Answer Gang this month. It also inspired a new section of the Mailbag, "LG Gems", to showcase this sort of thing, in the hope of finding more "gems" - especially those explaining Linux and open source culture.

I expect this to be an occasional feature in LG, and will be basing it,

as with the inaugural piece, on peer acclaim. Do look around in the Mailbag

archives to see if there's hidden treasure back there, and let me know!

Kat Tanaka Okopnik

Mailbag Editor

Bus and Taxi analogy (Was Cut and paste between two computers. Was linking my sound card to xoscope)

Kapil Hari Paranjape [kapil at imsc.res.in]

Tue, 31 Mar 2009 11:05:26 +0530

Hello,

On Mon, 30 Mar 2009, 2elnav@netbistro.com wrote:

> Don't know how to do that in Linux. Can't seem to figure it out.

>

> Judging by how my query on siggen is being handled I despair of ever

> figuring out these other issues. Maybe I should stick to Windows.

> I am just getting more confused by all the jargon.

Here is an analogy that may help you understand the distinction.

A man who has only ever ridden in a taxi decides to take a bus one

day. The bus stops and he gets in along with the other people waiting

at the stop.

He starts to occupy a seat when the driver (or in India the

conductor) asks him to buy a ticket before getting in. He is

annoyed: "I have to pay before I get to my destination?"

However, he agrees and tries to pay $50 -- which is refused. Other

passengers try to help him and suggest that he use a smaller

amount. Somehow he manages to find some small change and pay

his fare.

He then tells the bus driver he wants to go to the airport. The people

in the bus tell him he got into the wrong bus and that he should get

off this bus at the next stop and get into a different one. Now he is

quite annoyed and has started yelling at people saying that they are

making him really confused. He tells them that he has taken Shuttle

services (which are a shared transport service) and even there he has

never been made to do things so differently from a simple taxi ride.

...

There are many ways this story could end:

1. One kind old lady says she is going to somewhere near the airport

and will get off with him at the next stop and get him on the right

bus. The guy calms down and agrees.

2. The guy finds a booklet in the bus that explains the way the bus

system operates. He is fascinated and reads it all the way through.

Of course, engrossed in his reading, he reaches the last stop of the bus he is on, but by then he knows the system well enough that he can get to the airport from there by bus.

3. The guy yells and screams at everybody, gets off at the next stop, and vows to only ever travel by taxi again.

Regards,

Kapil.

--

[ Thread continues here (6 messages/5.94kB) ]


Talkback

Talkback:140/kapil.html

[ In reference to "Setting up an Encrypted Debian System" in LG#140 ]

Marius Pana [marius.pana at gmail.com]

Sat, 28 Mar 2009 11:12:10 +0200

There seem to be issues with cpio (the copy command), as it will copy /proc over! For example, /proc has 0 disk space used in my / root filesystem, but in /tmp/target it now has 4.8GB?! The cpio operation then fails with a "no space left on device" error. I am about to try changing the options to cpio / find and see if I can get it to work.

Regards,

Marius

[ Thread continues here (3 messages/3.29kB) ]

Talkback:135/knaggs.html

[ In reference to "Nomachine NX server" in LG#135 ]

Dave Kennedy [davek1802 at gmail.com]

Sun, 1 Mar 2009 15:17:10 -0800

Hi,

Good article.

I have a problem which I hope you can help me with.

Env:

Nomachine Nxclient for Windows 3.3.0-6

CENTOS 4.7 i686 on standard

nx-3.2.0-8.el4.centos.i386.rpm

freenx-0.7.3-1.el4.centos.i386.rpm

If I log in remotely as root, the GNOME desktop is displayed OK, but if I log in as another user, the !M splash screen is displayed and then closes with no GNOME desktop.

How can I verify that gnome is 'enabled' for the user?

Thanks

[ Thread continues here (2 messages/2.73kB) ]

Talkback:160/lg_bytes.html

[ In reference to "News Bytes" in LG#160 ]

Deividson Okopnik [deivid.okop at gmail.com]

Thu, 5 Mar 2009 00:33:03 -0300

[ Wait, wait... this is like a repeat nightmare. Isn't there a

standard story about how the Chevrolet Nova didn't sell well in Mexico

because 'no va' in Spanish means 'no go'??? Only this time, it's not

clueless American GM executives deciding on the name... -- Ben ]

Nova also means "new" in some Spanish-based languages (including Portuguese).

[ Thread continues here (3 messages/2.55kB) ]

Talkback:160/okopnik.html

[ In reference to "The Unbearable Lightness of Desktops: IceWM and idesk" in LG#160 ]

Ben Okopnik [ben at linuxgazette.net]

Thu, 5 Mar 2009 10:09:47 -0500

I just realized that I forgot one either minor or major thing in this

article, depending on how you look at it: how to actually auto-run

'idesk' under IceWM.

Since Ubuntu does its own thing with startup files, adding things to

~/.xinitrc or ~/.xsession won't do anything useful. However, IceWM

itself supports an init file mechanism of its own: if you place a file

called 'startup' into your ~/.icewm directory and make it executable, it

will be run when you start IceWM. Mine consists of nothing more than

/usr/bin/idesk &

--

* Ben Okopnik * Editor-in-Chief, Linux Gazette * https://LinuxGazette.NET *

[ Thread continues here (10 messages/11.24kB) ]
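The IceWM startup file Ben describes can be created in one go. A minimal sketch: `make_icewm_startup` is a hypothetical name, the directory defaults to ~/.icewm, any existing startup file is overwritten, and the "#!/bin/sh" line is an added precaution (not part of Ben's file) so that the file can be executed directly.

```bash
#!/bin/bash
# Sketch: create an executable IceWM 'startup' file that launches idesk.
# Overwrites any existing startup file in the target directory.
make_icewm_startup() {
    local dir=${1:-$HOME/.icewm}
    mkdir -p "$dir" || return 1
    cat > "$dir/startup" <<'EOF'
#!/bin/sh
/usr/bin/idesk &
EOF
    chmod +x "$dir/startup"
}
```

Calling `make_icewm_startup` with no argument writes ~/.icewm/startup; IceWM should then run it the next time it starts.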


2-Cent Tips

2-cent Tip: Screenshots without X

Kapil Hari Paranjape [kapil at imsc.res.in]

Sat, 21 Mar 2009 07:50:04 +0530

Hello,

I had to do this to debug a program so I thought I'd share it.

X window dump without X

How does one take a screenshot without X? (For example, from the text

console)

Use Xvfb (the X server that runs on a virtual frame buffer).

Steps:

1. Run Xvfb

$ Xvfb :99 &

This starts the X server in the background on display :99

$ DISPLAY=:99 ; export DISPLAY

2. Run your application in the appropriate state.

$ firefox https://www.linuxgazette.net &

3. Find out which window id corresponds to your application

$ xwininfo -name 'firefox-bin' | grep id

Or

$ xlsclients

Use the hex string that you get as window id in the commands

below

4. Dump the screen shot of that window

$ xwd -id 'hexid' > firefox.xwd

5. If you want to, then kill these applications along with the

X server

$ killall Xvfb

'firefox.xwd' is the screenshot you wanted. Use 'convert' or one of the netpbm tools to convert the 'xwd' format to 'png' or whatever.

Additional Notes:

A. You can use a different screenshot program.

B. If you need to manipulate the window from the command line, then

programmes like 'xautomation' and/or 'xwit' are your friends.

Alternatively, use a WM like "fvwm" or "xmonad":

DISPLAY=:99 xmonad &

This will allow you to manipulate windows from the command line if

you know some Haskell!

Regards,

Kapil.

--

[ Thread continues here (3 messages/3.04kB) ]
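Kapil's steps can be strung together into one script. This is a sketch under stated assumptions: display :99, the 1024x768x24 screen size, and the sleep delays are guesses to adjust, and `window_id` and `shot` are hypothetical names. `window_id` pulls the hex id out of xwininfo's "Window id: 0x..." line, which is the step most easily scripted wrong.

```bash
#!/bin/bash
# Extract the hex window id from xwininfo output on stdin
window_id() {
    sed -n 's/.*Window id: \(0x[0-9a-fA-F]*\).*/\1/p' | head -n 1
}

# Usage: shot WINDOW_NAME OUTPUT.xwd COMMAND [ARGS...]
shot() {
    local name=$1 out=$2 xpid id
    shift 2
    Xvfb :99 -screen 0 1024x768x24 &      # virtual frame buffer X server
    xpid=$!
    sleep 2                               # give the server time to start
    DISPLAY=:99 "$@" &                    # the application to capture
    sleep 5                               # let it draw its window
    id=$(DISPLAY=:99 xwininfo -name "$name" | window_id)
    if [ -n "$id" ]; then
        DISPLAY=:99 xwd -id "$id" > "$out"
    else
        echo "No window named '$name' found" >&2
    fi
    kill "$xpid"                          # stop the virtual X server
}
```

For example, `shot firefox-bin firefox.xwd firefox https://www.linuxgazette.net` followed by `convert firefox.xwd firefox.png`.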

2-cent Tip: Lists of files by extension

Ben Okopnik [ben at linuxgazette.net]

Sat, 21 Mar 2009 15:36:49 -0400

Recently, I decided to sort, organize, and generally clean up my rather

extensive music collection, and as a part of this, I decided to

"flatten" the number of file types that were represented in it. Over the

years, just about every type of audio file had made its way into it:

FLAC, M4A, WMA, WAV, MID, APE, and so on, and so on. In fact, the first

step would be to classify all these various types, get a list of each,

and decide how to convert them to MP3s (see my next tip, which describes

a generalized script to do just that.)

The process of collecting this kind of info wasn't unfamiliar to me; in

fact, I'd previously done this, or something like it, with the "find"

command when I was trying to establish what kind of files I'd want to

index in a search database. This time, however, I took a bit of extra

care to deal with names containing spaces, non-English characters, and

files with no extensions. I also defined a list of extensions that I wanted to ignore (see the "User-modified vars" section of the script) and provided the option of specifying the directory to index (the current one by default) and the directory in which to create the 'ext' files (/tmp/files<random_string> by default; the script notifies you of the name).

This isn't something that comes up often, but it can be very useful in

certain situations.

#!/bin/bash
# Created by Ben Okopnik on Thu Mar 12 11:54:02 EDT 2009
# Creates a list of files named after all found extensions and containing the associated filenames

[ "$1" = "-h" -o "$1" = "--help" ] && { echo "${0##*/} [dir_to_read] [output_dir]"; exit 0; }
[ -n "$1" -a ! -d "$1" ] && { echo "'$1' is not a valid input directory"; exit 1; }
[ -n "$2" -a ! -d "$2" ] && { echo "'$2' is not a valid output directory"; exit 1; }

################ User-modified vars ########################
dir_root="/tmp/files"
ignore_exts="m3u bak"
################ User-modified vars ########################

snap=`pwd`
# Make the input directory absolute, since we 'cd /' below
[ -n "$1" ] && snap=$(cd "$1" && pwd)
[ -n "$2" ] && dir_root="$2"
out_dir=`mktemp -d "${dir_root}XXX"`
echo "The output will be written to the '$out_dir' directory"

cd /
[ -n "$(/bin/ls "$out_dir")" ] && /bin/rm "$out_dir"/*
# Read one name per line, so spaces and wildcard characters survive intact
/usr/bin/find "$snap" -type f | while IFS= read -r n
do
	ext=$(printf '%s\n' "${n/*.}" | tr 'A-Z' 'a-z')
	# No extension means the substitution won't work; no substitution means
	# we get the entire path and filename. So, no ext (or an empty one after
	# a trailing dot) gets spun off to 'none'.
	case "$ext" in ''|*/*) ext=none ;; esac
	# Ignore all specified extensions (whole-word, case-insensitive match)
	echo "$ignore_exts" | /bin/grep -qiwF -- "$ext" && continue
	printf '%s\n' "$n" >> "$out_dir/$ext"
done
echo "Done."

--

* Ben Okopnik * Editor-in-Chief, Linux Gazette * https://LinuxGazette.NET *

2-cent Tip: Converting from $FOO to MP3

Ben Okopnik [ben at linuxgazette.net]

Wed, 25 Mar 2009 10:03:21 -0400

Recently, while organizing my (very large) music library, I analyzed the

whole thing and found out that I had almost 30 (!) different file types.

Much of this was a variety of info files that came with the music (text,

PDF, MS-docs, etc.) as well as image files in every conceivable format

(which I ended up "flattening" to JPG) - but a large number of these

were music formats of every kind, a sort of a living museum of "Music

Formats Throughout the Ages." I decided to "flatten" all of that as well

by converting all the odd formats to MP3.

Fortunately, there's a wonderful Linux app that will take pretty much

every kind of audio - "mplayer"

(https://www.mplayerhq.hu/DOCS/codecs-status.html#ac). It can also dump

that audio to a single, easily-convertible format (WAV). As a result, I created a script called "2mp3" that uses "mplayer" and "lame" to process a directory of music files.

It was surprisingly difficult to get everything to work together as it

should, with some odd challenges along the way; for example, redirecting

error output for either of the above programs was rather tricky. The

script processes each file, creates an MP3, and appends to a log called

'2mp3.LOG' in the current directory. It does not delete the original

files - that part is up to you. Enjoy!

#!/bin/bash

# Created by Ben Okopnik on Mon Jul 2 01:16:32 EDT 2007

# Convert various audio files to MP3 format

#

# Copyright (C) 2007 Ben Okopnik <ben@okopnik.com>

# This program is free software; you can redistribute it and/or modify

# it under the terms of the GNU General Public License as published by

# the Free Software Foundation; either version 2 of the License, or

# (at your option) any later version.

#

# This program is distributed in the hope that it will be useful,

# but WITHOUT ANY WARRANTY; without even the implied warranty of

# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

# GNU General Public License for more details.

########## User-modifiable variables ###########################

set="*{ape,flac,m4a,wma,qt,ra,pcm,dv,aac,mlp,ac3,mpc,ogg}"

########## User-modifiable variables ###########################

# Need to have Bash expand the construct

set=`eval "ls -1 $set" 2>/dev/null`

# Set the IFS to a newline (i.e., ignore spaces and tabs in filenames)

IFS='

'

# Turn off the 'fake filenames' for failed matches

shopt -s nullglob

# Figure out if any of these files are present. 'ls' doesn't work (reports

# '.' for the match when no matching files are present) and neither does

# 'echo [pattern]|wc -w' (fails on filenames with spaces); this strange

# method seems to do just fine.

for f in $set; do ((count++)); done

[ -z "$count" ] && { echo "None of '$set' found; exiting."; exit 1; }

# Blow away the previous log, if any

[ ... ]
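The rest of the script is elided here, so what follows is not Ben's code. It is a minimal sketch of the same idea, under stated assumptions. It replaces the counting loop with a Bash array filled by an extglob pattern while `nullglob` is set, so an empty array means no matches and names containing spaces stay intact. It then converts each file by dumping WAV audio through mplayer's `-ao pcm` output and encoding the result with lame. The function names (`mp3_name`, `convert_one`, `main`), the `-h` quality setting and the log line format are illustrative choices and are not taken from "2mp3".

```bash
#!/bin/bash
# Sketch only: NOT the elided remainder of "2mp3"; all names are hypothetical.
shopt -s nullglob extglob

# Extensions copied from the "set" variable in the script above.
exts='ape|flac|m4a|wma|qt|ra|pcm|dv|aac|mlp|ac3|mpc|ogg'

# Output name: drop the last extension and append ".mp3".
mp3_name() {
    printf '%s.mp3\n' "${1%.*}"
}

# Decode one file to a temporary WAV with mplayer, then encode it with lame.
convert_one() {
    local src=$1 wav rc
    wav=$(mktemp) || return 1
    # -vo null / -vc null skip video; -ao pcm:fast:file=... dumps WAV audio.
    # </dev/null stops mplayer from reading keystrokes from the terminal.
    mplayer -really-quiet -vo null -vc null -ao pcm:fast:file="$wav" "$src" </dev/null &&
        lame --quiet -h "$wav" "$(mp3_name "$src")"
    rc=$?
    rm -f "$wav"
    return "$rc"
}

# Convert every matching file in the current directory, logging to 2mp3.LOG.
main() {
    local files f
    # With nullglob, no match gives an empty array instead of a literal pattern.
    files=( *.@($exts) )
    if (( ${#files[@]} == 0 )); then
        echo "No matching audio files found; exiting." >&2
        return 1
    fi
    for f in "${files[@]}"; do
        if convert_one "$f" >>2mp3.LOG 2>&1; then
            echo "OK: $f" >>2mp3.LOG
        else
            echo "FAILED: $f" >>2mp3.LOG
        fi
    done
}
```

To try it, source the file from inside a music directory and call `main`; like the original, it leaves the source files in place. Redirecting mplayer's stdin to /dev/null is a precaution, since mplayer otherwise reads the terminal for its keyboard controls.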

[ Thread continues here (1 message/4.19kB) ]

Talkback: Discuss this article with The Answer Gang

Published in Issue 161 of Linux Gazette, April 2009

News Bytes

By Deividson Luiz Okopnik and Howard Dyckoff


Selected and Edited by Deividson Okopnik

Please submit your News Bytes items in

plain text; other formats may be rejected without reading.

[You have been warned!] A one- or two-paragraph summary plus a URL has a

much higher chance of being published than an entire press release. Submit

items to bytes@linuxgazette.net.

News in General

IDC: IT Turning to Linux in Economic Downturn


A recent market survey conducted by IDC (and sponsored by Novell)

reveals a surge in the acquisition of Linux as the worldwide recession

deepens. More than half of the IT executives surveyed plan to accelerate

Linux adoption in 2009. Specifically, more than 72 percent of

respondents say they are either actively evaluating or have already

decided to increase their adoption of Linux on the server in 2009,

with more than 68 percent making the same claim for the desktop.

The study surveyed more than 300 senior IT executives spanning

manufacturing, financial services, and retail industries across the

globe, as well as government agencies. The number one motivation

executives gave for migrating to Linux was economic and related to

lowering ongoing support costs.

"The feedback gleaned from this market survey confirms our belief

that, as organizations fight to cut costs and find value in this tough

economic climate, Linux adoption will accelerate," said Markus Rex,

general manager and senior vice president for Open Platform Solutions

at Novell. "Companies also told us that strengthening Linux

application support, interoperability, virtualization capabilities and

technical support will all fuel adoption even more."

Additional key survey findings include:

- 67 percent of respondents stated that interoperability and

manageability between Linux and Windows is one of the most important

factors when choosing an operating system.

- The retail industry showed the greatest potential for

acceleration in Linux adoption with 63 percent of respondents planning

an increase on the desktop and 69 percent considering the same on the

server. The government sector lagged.

- Almost 50 percent of respondents plan to accelerate adoption of

Linux on the desktop, especially for basic office functions, technical

workstation users, and higher education/K-12.

- Nearly half of respondents stated that moving to virtualization

is accelerating their adoption of Linux. Eighty-eight percent of

respondents plan to evaluate, deploy or increase their use of

virtualization software within Linux operating systems over the next

12-24 months.

- From a regional perspective, Asia/Pacific is the most bullish on

increasing Linux adoption, as 73 percent of respondents said they

would increase deployments on the server and 70 percent on the

desktop. In the Americas, 66 percent of respondents said they are

either evaluating or have already decided to increase adoption of

Linux on the desktop and 67 percent on the server.

- The economic crisis has had the biggest effect in the Americas

and in the financial services and government sectors. More than 62 percent of

respondents said that their budget has been cut or that they are only

investing where needed.

The research, conducted in February 2009, showed that 55 percent of

respondents had Linux server operating systems in use, 39 percent had

Unix server operating systems in use, and 97 percent had Windows

server operating systems in use. Respondents were pre-screened via

demographic screeners and completed the survey online. Novell was not

involved in recruiting, and respondents did not need to be Novell

customers.

An IDC white paper summarizing the survey findings can be found at

https://www.novell.com/idc.

Sun Microsystems Unveils Open Cloud Platform


At its CommunityOne developer event in mid-March, Sun Microsystems

showcased its Sun Open Cloud Platform. Sun previewed plans to launch

the Sun Cloud, its first public cloud service targeted at developers

and startups, and to provide public APIs.

Sun is opening its cloud APIs for public review and comment, so that

others building public and private clouds can easily design them for

compatibility with the Sun Cloud. Sun's Cloud API specifications are

published under a Creative Commons license, which essentially allows

anyone to use them in any way. Developers will be able to deploy

applications to the Sun Cloud immediately, by leveraging pre-packaged

VMIs (virtual machine images) of Sun's open source software,

eliminating the need to download, install and configure infrastructure

software. To participate in the discussion and development of Sun's

Cloud APIs, go to https://sun.com/cloud.

At the core of the Sun Cloud will be the first two services - Sun

Cloud Storage Service and Sun Cloud Compute Service - which will be

available this summer. Customers will be able to take advantage of the

combined benefits of open source and cloud computing via the Sun Cloud

to accelerate the delivery of new applications, reducing overall risk

and quickly scaling compute and storage capacity up and down to meet

demand. Sun is leveraging its extensive portfolio of products,

unparalleled world-class professional services and extensive expertise

in building open communities and partner ecosystems to deliver the Sun

Cloud. Sun will also take the technologies and architectural

blueprints developed for the Sun Cloud and make them available to

customers building their own clouds, ensuring interoperability among

clouds.

Sun is leveraging several technologies to make its Sun Cloud

incredibly easy to use - from deploying applications to provisioning

resources. At the core of the Sun Cloud Compute Service are the

Virtual Data Center (VDC) capabilities acquired in Sun's purchase of

Q-layer in January 2009, which provide everything an individual or

team of developers needs to build and operate a datacenter in the

cloud. The VDC provides a unified, integrated interface to stage an

application running on any operating system within a cloud, including

OpenSolaris, Linux or Windows. It features a drag-and-drop method, in

addition to APIs and a command line interface for provisioning

compute, storage and networking resources via any Web browser. The Sun

Cloud Storage Service supports WebDAV protocols for easy file access

and object store APIs that are compatible with Amazon's S3 APIs.

Sun announced that leading partners and key advocates for cloud

standards are supporting its goal to deliver an open cloud platform. Cloud Foundry, RightScale and Zmanda are three of the many cloud

application providers, cloud management solution providers, Service

Providers and cloud consulting companies partnering with Sun.

Eucalyptus, an open source infrastructure for implementing cloud computing, is also

supporting Sun's approach to drive standards-based, open source cloud

platforms and applications, enabling users to integrate with other

platforms and services.

To view the CommunityOne event webcast live at 9 am ET, go to https://sun.com/communityone. It will

also be available on-demand.

To register for the Sun Cloud Early Access program, go to https://sun.com/cloud.

LPIC-3 Enterprise-level "Security" Exam


The Linux Professional Institute (LPI) has launched its new

"Security" exam elective for its LPIC-3 certification program,

effective March 1, 2009. The LPI-303 "Security" exam is the second

elective available in the organization's enterprise-level LPIC-3

certification program for Linux professionals.

The LPIC-3 certification program consists of a single "Core" exam

(LPI-301), which focuses on skills in authentication, troubleshooting, network

integration and capacity planning. This "Core" certification can be

supplemented by existing speciality electives in "Mixed Environments"

(LPI-302) and "Security" (LPI-303). Additional speciality electives are

planned for release in "High Availability and Virtualization", "Web and

Intranet", and "Mail and Messaging". Detailed information on the LPIC-3

program, exam objectives, tasks and sample questions can be found at

https://www.lpi.org/lpic-3.

Eclipse Announces First Release of Swordfish, a Next Generation ESB


At the EclipseCon conference in March, the Eclipse Foundation

announced the first release of Swordfish, a next-generation enterprise

service bus (ESB) that provides the flexibility and extensibility

required by enterprises to successfully deploy a service-oriented

architecture (SOA) strategy. Swordfish is based on the OSGi standard

and builds upon successful open source projects, including Eclipse

Equinox and Apache ServiceMix.

Swordfish provides the features and extensible framework required by

enterprises and system integrators to customize their ESB to meet the

specific needs of an enterprise. These features include:

- Support for distributed deployment, which results in more

scalable and reliable application deployments by removing a central

coordinating server.

- A runtime Service Registry allows services to be loosely coupled,

making it easier to change and update different parts of a deployed

application. The Registry uses policies to match service consumers and

service providers based on their capabilities and requirements.

- An extensible Monitoring Framework manages events that allow for

detailed tracking of how messages are processed. These events can be

stored for trend analysis and reporting, or integrated into a complex

event processing system (CEP).

- A Remote Configuration Agent makes it possible to configure a

large number of distributed servers from a central configuration

repository without the need to touch individual installed instances.

"We are developing Swordfish to meet the requirements we experienced

deploying large scale SOA applications at Deutsche Post and other

large enterprises," explained Ricco Deutscher, CTO of Sopera and a

member of the Eclipse Runtime Project Management Committee. "Using

Equinox and OSGi, we are able to provide the flexible and extensible

architecture required for SOA deployments to be successful."

"Last year we announced a strategy to provide open source runtime

technology based on Equinox and OSGi," remarked Mike Milinkovich,

Executive Director of the Eclipse Foundation. "The first release of

Swordfish is a great example of the progress that is being made to

develop our runtime technology portfolio. Over the next year I expect

we will see more interesting runtime technology built at Eclipse."

Swordfish 0.8, the first release, will be available for download in the

first week of April from https://www.eclipse.org/swordfish/.

Pulsar Mobile Group formed by Eclipse


In March, the Eclipse Foundation announced Pulsar, a new industry

initiative to define and create a standard mobile application development

tools platform. The initiative is led by Motorola, Nokia and Genuitec. Other participating members

include IBM, RIM and Sony Ericsson.

Pulsar will support major mobile development environments such as

JavaME, mobile Web technologies, and native mobile platforms.

Instead of requiring mobile developers to use a variety of software

development kits (SDKs) to develop their applications for different

handset manufacturers, Pulsar will define a common set of

Eclipse-based tools in a packaged distribution that will interoperate

with the various handset SDKs. This will enable developers to stay

within one familiar development environment while creating mobile

applications that target multiple device families.

The Pulsar initiative will focus on four areas:

- The creation of the packaged distribution called Eclipse Pulsar

Platform;

- A technical roadmap to advance the capabilities of the platform;

- A set of best practices which includes documentation and test

suites; and

- Education and outreach to drive adoption of Pulsar with mobile

application developers.

The first release of Pulsar Platform is expected to be available at

the end of June 2009 and will be part of the Eclipse Galileo annual

release.

Open Source AWS Toolkit for Eclipse available now


The AWS Toolkit for Eclipse was announced at a keynote presentation at

EclipseCon 2009. This is a new plugin for Eclipse, targeted at Tomcat

or other application servers running in the Amazon cloud. Support for

Glassfish, JBoss, WebSphere, and WebLogic is planned.

The AWS Toolkit for Eclipse, based on the Eclipse Web Tools Platform,

guides Java developers through common workflows and automates tool

configuration, such as setting up remote debugger connections and

managing Tomcat containers. The steps to configure Tomcat servers, run

applications on Amazon EC2, and debug the software remotely are now

done seamlessly through the Eclipse IDE.

The new plugin requires Java 1.5 or higher. The Eclipse IDE for Java

Developers 3.4 is recommended. Find more info here:

https://aws.amazon.com/eclipse/

Conferences and Events

- TechTarget Advanced Virtualization Roadshow

-

March - December 2009, various cities

https://go.techtarget.com/r/5861576/5098473

- ESC Silicon Valley 2009 / Embedded Systems

-

March 30 - April 3, San Jose, CA

https://esc-sv09.techinsightsevents.com/

- USENIX HotPar '09 Workshop on Hot Topics in Parallelism

-

March 30 - 31, Claremont Resort, Berkeley, CA

https://usenix.org/events/hotpar09/

- Web 2.0 Expo San Francisco

-

Co-presented by O'Reilly Media and TechWeb

March 31 - April 3, San Francisco, CA

- STPCon Spring

-

March 31 - April 2, San Mateo, CA

- Linux Collaboration Summit 2009

-

April 8 - 10, San Francisco, CA

https://events.linuxfoundation.org/events/collaboration-summit

- Black Hat Europe 2009

-

April 14 - 17, Moevenpick City Center, Amsterdam, NL

https://www.blackhat.com/html/bh-europe-09/bh-eu-09-main.html

- MySQL Conference & Expo

-

April 20 - 23, Santa Clara, CA

https://www.mysqlconf.com/

- RSAConference 2009

-

April 20-24, San Francisco, CA

https://www.rsaconference.com/2009/US/Home.aspx

- USENIX/ACM LEET '09 & NSDI '09

-

The 6th USENIX Symposium on Networked Systems Design & Implementation

(USENIX NSDI '09) will take place April 22–24, 2009, in Boston, MA.

Please join us at The Boston Park Plaza Hotel & Towers for this symposium

covering the most innovative networked systems research, including 32

high-quality papers in areas including trust and privacy, storage, and

content distribution; and a poster session. Don't miss the opportunity to

gather with researchers from across the networking and systems community to

foster cross-disciplinary approaches and address shared research

challenges.

https://www.usenix.org/nsdi09/lg

- IDC Virtualization Forum

-

April 23, Four Seasons Hotel, San Francisco, CA

https://www.idc.com/virtualization-west09

- SOA Summit 2009

-

May 4 - 5, Scottsdale, AZ

https://www.soasummit2009.com/

- RailsConf 2009

-

May 4 - 7, Las Vegas, NV

- STAREAST - Software Testing, Analysis & Review

-

May 4 - 8, Rosen Hotel, Orlando, FL

https://www.sqe.com/go?SE09home

- EMC World 2009

-

May 18, Orlando, FL

https://www.emcworld.com/

- Interop Las Vegas 2009

-

May 19 - 21, Las Vegas, NV

https://www.interop.com/lasvegas/

- SouthEast LinuxFest

-

The SouthEast LinuxFest will hold its first annual conference at Clemson

University on June 13, 2009.

The SouthEast LinuxFest is a community event for anyone who wants to learn

more about Linux and Free & Open Source software. It is part educational

conference and part social gathering. Like Linux itself, it is shared

with attendees of all skill levels, who exchange tips and ideas for the

benefit of all who use Linux/Free and Open Source Software. LinuxFest is the

place to learn, to make new friends, to network with new business

partners, and most importantly, to have fun! It is FREE to attend.

Please see our website for details and speakers.

https://southeastlinuxfest.org/

- Semantic Technology Conference

-

June 14 - 18, Fairmont Hotel, San Jose, CA

- HP Tech Forum 2009

-

June 15 - 18, Las Vegas, NV

https://www.hptechnologyforum.com/

- Velocity Conference 2009

-

June 22 - 24, San Jose, CA

https://conferences.oreillynet.com

- SharePoint TechCon Boston

-

June 22 - 24, Cambridge, MA

- Gartner IT Security Summit 2009

-

June 28 - July 1, Washington, DC

https://www.gartner.com/it/page.jsp?id=749433

- Cisco Live/Networkers 2009

-

June 28 - July 2, San Francisco, CA

https://www.cisco-live.com/

- OSCON 2009

-

July 20 - 24, San Jose, CA

https://en.oreilly.com/oscon2009

Distro News

Red Hat Enterprise Linux 4.8 Beta


Red Hat is now testing the beta release of RHEL 4.8

(kernel-2.6.9-82.EL) for the Red Hat Enterprise Linux 4 family of

products.

Red Hat Enterprise Linux 4.8 is in development and the implemented

features and supported configurations are subject to change before

the release of the final product. The beta CD and DVD images are

intended for testing purposes only. Benchmark and performance results

cannot be published based on this beta release without explicit

approval from Red Hat.

While the 'anaconda' installer's upgrade option can upgrade Red Hat Enterprise Linux

4.7 to the Red Hat Enterprise Linux 4.8 beta, there is no guarantee

that the upgrade will preserve all of a system's settings, services,

and custom configurations. For this reason, Red Hat recommends a fresh

installation rather than an upgrade. Also note that upgrading from the

beta release to the GA product is not supported.

Red Hat is moving GCC4 from Tech Preview to supported status, but notes that

GCC4 in Enterprise Linux 4 is not fully ABI compatible with Red Hat

Enterprise Linux 5. Applications compiled on the older version,

Enterprise Linux 4, are expected to continue to work on the newer

version 5 as long as they use libraries that are also supported on

Enterprise Linux 5 (either directly or via compatibility libraries).

The RHEL 4.8 beta is available to existing Red Hat Enterprise Linux subscribers

via RHN. Installable binary and source ISO images are available via Red

Hat Network at: https://rhn.redhat.com/network/software/download_isos_full.pxt.

OpenBSD 4.5 Pre-Orders Available Now


The OpenBSD project's upcoming release, version 4.5, is now available

as a pre-order ($50.00 + shipping). Scheduled for May 2009, OpenBSD

4.5 will ship with a large number of new features and broad hardware

support, including x86, Sparc, ARM and PowerPC CPUs.

Among the software inclusions are:

- Gnome 2.24.3.

- GNUstep 1.18.0.

- KDE 3.5.10.

- Mozilla Firefox 3.0.6.

- Mozilla Thunderbird 2.0.0.19.

- MySQL 5.0.77.

- OpenOffice.org 2.4.2 and 3.0.1.

- PostgreSQL 8.3.6.

For more information please see the OpenBSD 4.5 features page, here:

https://openbsd.org/45.html.

Software and Product News

Oracle Middleware Pack for Eclipse Now Available


During EclipseCon, Oracle announced it is providing Java developers

with new tools, including the Oracle Enterprise Pack for Eclipse, a

free component of Oracle Fusion Middleware. Included in the Enterprise

Pack is an Oracle WebLogic Server Plug-in, Object-Relational Mapping

(ORM) tools, and Spring and Web Service tools to reduce development

complexity for Java and database applications.

In addition to the WebLogic Server Plug-in, Oracle Enterprise Pack for

Eclipse Release 11g adds new features, including:

- The ability for Eclipse developers to configure, develop and

test JAX-WS and JAXB services in Java with EJB3 and XML integration.

- Object-Relational Mapping (ORM) Workbench for Java Persistence

API (JPA), which makes work with relational data in Java easy. The

Workbench can generate and edit JPA entities, annotate existing

classes, work with relationships and map datatypes.

- EclipseLink support - the ORM Workbench supports EclipseLink and

other JPA providers.

- Spring IDE Project and Spring code generation wizards to easily

work with Spring Beans and generate Spring DAO from existing JPA

Entities.

- Editors and Wizards for common WebLogic Server descriptors to

decrease the effort developers spend making configuration changes.

- Oracle Database Tools, making it easier to work with relational

data in Java.

An Eclipse Foundation Board Member, Oracle has a long history of

participation in the Eclipse community. Oracle currently leads several

Eclipse-based projects including JavaServer Faces (JSF) Tools,

Dali JPA Tooling, Eclipse Data Tools Platform, and EclipseLink

(derived from Oracle TopLink).

"It is great to see Oracle expanding on its Eclipse tools strategy and

further contributing to the community," said Mike Milinkovich,

executive director of the Eclipse Foundation. "The Oracle Enterprise

Pack for Eclipse 11g release provides a nice complement to the work

they are doing in the Web Tools Platform Projects, which includes the

Dali project, the JSF tools project, the Java EE tools project, and

the EclipseLink project."

For more info, visit:

* https://blogs.oracle.com/devtools/

* https://java-persistence.blogspot.com/

* https://blogs.oracle.com/gstachni/

Linpus Shows Instant-on Netbook At CeBIT


Linpus Technologies, a leader in the field of Linux solutions for low

cost notebooks, netbooks and nettops, announced its entry into the fast

boot product market with a sneak preview of the new version of its

flagship product, Linpus Linux Lite.

To achieve fast boot-up and launch of applications for Linpus QuickOS,

Linpus engineers set out to leverage their expertise in fine-tuning

and maximizing software performance for less powerful hardware

platforms in the netbook market. With QuickOS they redefined

functionality for the product and stripped away unnecessary libraries.

Also included is a customized virtual engine to read, edit and save

Windows files and also run popular multimedia, productivity, and

gaming software while running Linpus Linux Lite.

"The netbook market requires operating system solutions that are rich,

powerful, yet lightweight and fast," said Warren Coles, the marketing

director for Linpus. "Our work on the Acer Aspire One and since with

the Moblin project taught us that much could be done at the software

level to decrease boot-up time."

Itemis Extends Eclipse EMF Modeling Capability


Itemis is releasing the newly developed TMF-Xtext for inclusion in

the next version of Eclipse in June 2009, and piloting the new

EMF-Index project. Both of the projects were discussed in sessions at

EclipseCon 2009: "Next generation textual DSLs with Xtext" and

"Managing Big Ecore Models with EMF Index".

With TMF-Xtext, so-called domain-specific languages (DSLs) can be

created very simply. This open-source framework is part of the

Eclipse Modeling Project and is being further developed by itemis

employees in the Textual Modeling Framework (TMF) project.

Itemis is also working on the new Eclipse project, EMF-Index, which aims

to make modeling scalable. EMF-Index is a key element for the

use of a large number of models in a working environment and enables a

quick search for model elements.

For more info, go to: https://www.itemis.com

Instantiations Releases WindowBuilder Pro v7.0 for Eclipse


Instantiations has released version 7.0 of its market-leading

WindowBuilder Pro Java graphical user-interface (GUI) builder.

WindowBuilder Pro includes powerful functionality for creating user

interfaces based on the popular Swing, SWT (Standard Widget Toolkit),

and GWT (Google Web Toolkit) UI frameworks. This product won an

Eclipse Technology Award at EclipseCon in March for Best Commercial

Eclipse-Based Developer Tool.

WindowBuilder Pro is a bi-directional Eclipse GUI builder with

drag-and-drop functionality and automatic Java code generation. The

product includes a visual design editor, wizards, intelligent layout

assistants, localization and more. WindowBuilder Pro component

products include Swing Designer, SWT Designer, and GWT Designer.

"It has been impressive to see the continued growth and popularity of

WindowBuilder Pro," said Mike Milinkovich, executive director of the

Eclipse Foundation. "Instantiations continues to deliver high quality,

innovative tools for the Eclipse platform that help developers utilize

Eclipse more effectively, and we're pleased with their continued

support of Eclipse."

Updates in v7.0 include UI Factories, a convenient way to create

customized, reusable versions of common components, improved parsing

using binary execution flow, a new customization API for third party

extensibility, Eclipse Nebula widgets integration (SWT), Swing Data

Binding, JSR 295 (Swing), and full support for GWT-Ext widgets and

layouts (GWT).

WindowBuilder Pro v7.0 is available for $329 USD with a traditional

software license that includes 90 days of upgrades, maintenance and

technical support. Product upgrades are available at no cost to

customers with current support agreements. Download full-feature trial

evaluation software from

https://www.instantiations.com/prods/docs/download.html.

Instantiations is a founding member of the Eclipse Foundation and the

Smalltalk Industry Council. The company is also a major contributor

in the Smalltalk language market with its VA Smalltalk.

Talkback: Discuss this article with The Answer Gang


Deividson was born in União da Vitória, PR, Brazil, on

14/04/1984. He became interested in computing when he was still a kid,

and started to code when he was 12 years old. He is a graduate in

Information Systems and is finishing his specialization in Networks and

Web Development. He codes in several languages, including C/C++/C#, PHP,

Visual Basic, Object Pascal and others.

Deividson works in Porto União's Town Hall as a Computer

Technician, and specializes in Web and Desktop system development, and

Database/Network Maintenance.

Howard Dyckoff is a long-time IT professional with primary experience at

Fortune 100 and 200 firms. Before his IT career, he worked for Aviation

Week and Space Technology magazine and before that used to edit SkyCom, a

newsletter for astronomers and rocketeers. He hails from the Republic of

Brooklyn [and Polytechnic Institute] and now, after several trips to

Himalayan mountain tops, resides in the SF Bay Area with a large book

collection and several pet rocks.

Howard maintains the Technology-Events blog at

blogspot.com from which he contributes the Events listing for Linux

Gazette. Visit the blog to preview some of the next month's NewsBytes

Events.

Upgrading your Slug

By Silas Brown

If you installed Debian on an NSLU2 device ("Slug") following Kapil Hari Paranjape's instructions in LG

#138, then you might now wish to upgrade from etch (old

stable) to lenny (current stable). Debian itself contains

instructions for doing this, which you can follow if you like (but see the

note below about the locales package causing a crash). If you use the

NSLU2's watchdog driver, then I recommend first booting without it;

otherwise, the general unresponsiveness caused by the upgrade can cause the

watchdog to reboot the system at a point when it is unbootable, and you'll have to

restore the filesystem from backup. However, following the standard Debian

dist-upgrade will still leave you running lenny using the

arm architecture. Debian's arm port is now considered

deprecated, and in future releases will be replaced by the armel

port, which has (among other things) significant speed improvements in

floating-point emulation just by changing the handling of the ARM's

registers, stack frames, etc.

If you want to move to armel at the same time as you're upgrading

to lenny, this normally requires re-installing from scratch (since

ArchTakeover is not yet

implemented.) However, there is also a way to do it incrementally.

Martin Michlmayr has produced an unpacked version of

the lenny armel install, which can be downloaded and untarred into a

subdirectory of your etch system. Etch will not be able to chroot into

this, but at least it lets you compare key configuration files and make

needed changes from the comfort of a working system.

Moving Files and Setting up Configuration

The first thing you need to do is copy fstab from the

old /etc to the new one; you'll also need resolv.conf, hostname,

hosts, mailname, timezone, and adjtime. You might also like to copy

apt/sources.list (change it to "lenny" or "stable", if it says "etch"), and

the following files if you have customised them: inittab, inetd.conf,

logrotate.conf. For the password files (i.e., passwd, shadow, group,

and gshadow - you don't have to worry about any backup versions ending in

-), it is best not to simply copy them from etch, because that can

delete the new accounts that various lenny base packages use. Instead, you

can use diff -u etc/passwd /etc/passwd | grep \+, pick out the

real user accounts, and merge them in (and do the same with group,

shadow, gshadow). To review all changes that the new

distribution will make, do something like diff -ur /etc etc|less

and read through it, looking for what you want to restore. (However, note

that some packages won't be there yet; try searching the list for "Only in

/etc" to see which new config files you might want to copy across in

preparation for them.) Note that lenny uses rsyslog.conf instead of

syslog.conf.
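Picking out the real user accounts can be partly automated. What follows is a minimal sketch, assuming Debian's default convention that regular users get IDs from 1000 upward (65534 being `nobody`) and that you run it from the new tree so that `etc/passwd` is the lenny file. The function name `missing_accounts` is hypothetical. It only prints the old entries whose names are absent from the new file and edits nothing, so you can review them first.

```bash
#!/bin/bash
# Hypothetical helper: print entries from OLD (a passwd or group file) whose
# ID (3rd field) is in Debian's regular range 1000-65533 and whose name
# (1st field) does not appear in NEW.
# Usage, from the new root: missing_accounts /etc/passwd etc/passwd
missing_accounts() {
    local old=$1 new=$2
    awk -F: '
        # Lines from NEW (first operand): remember every name it contains.
        FILENAME == ARGV[1] { seen[$1] = 1; next }
        # Lines from OLD: regular accounts that NEW does not have yet.
        $3 >= 1000 && $3 < 65534 && !($1 in seen)
    ' "$new" "$old"
}
```

Group files also keep the numeric ID in the third field, so the same function works on `/etc/group` and `etc/group`. `shadow` and `gshadow` have no ID field, so copy their lines for the merged accounts by matching the name instead. Redirect the output to a file and check it before appending anything.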

After you are happy with /etc, please remember to copy across the

crontabs in /var/spool/cron. (I've lost count of the number of

times I, as a user, have had to reinstate my crontab because some admin

forgot to copy the crontabs during an upgrade.) Also, make a list of the

useful packages you've installed that you want to re-install on the new

system. Finally, remove (rm -r) the following top-level

directories from the new system (make sure you're in the new system, not in

/!): media, home, root, tmp, lost+found, proc, mnt, sys,

srv. Removing these means they will not overwrite the corresponding

top-level directories from the old system during the next step.
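Because a stray rm -r at this point could damage the running system, it may be worth wrapping this step in a guard. The following sketch (function names hypothetical) refuses to prune a directory that resolves to /, and records the installed packages with dpkg --get-selections so they can be re-selected on the new system. Restoring them with dpkg --set-selections followed by apt-get dselect-upgrade is one common approach, not necessarily the author's; newer dpkg versions may also want their available-packages list updated first.

```shell
#!/bin/sh
# Hypothetical helpers for the preparation step.

# Record the packages installed on the old system. They can later be
# re-selected with:  dpkg --set-selections < FILE ; apt-get dselect-upgrade
save_package_list() {
    dpkg --get-selections > "$1"
}

# Remove the top-level directories that must not overwrite the old ones.
# Refuses to run if the argument resolves to / (or does not exist).
prune_new_root() {
    root=$1
    case $(cd "$root" && pwd -P) in
        /|"") echo "refusing to prune '$root'" >&2; return 1 ;;
    esac
    for dir in media home root tmp lost+found proc mnt sys srv; do
        rm -rf "${root:?}/$dir"
    done
}
```

For example, run save_package_list /root/packages.txt on the old system, then prune_new_root with the path of the directory you untarred the armel install into.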

The new system now needs to be copied onto the old one (with the old

directories being kept as backups), and the NSLU2's firmware needs

updating to the lenny version (also downloadable from the above-mentioned

site) using upslug2 from a desktop, as documented. The directories

are best copied over from another system: halt the NSLU2, mount its disk in

another system, and do

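cd /new-system
for D in * ; do
    # keep each existing old directory as a $D.old backup, then move the new one in
    if [ -e "/old-system/$D" ]; then
        mv "/old-system/$D" "/old-system/$D.old" || continue
    fi
    mv "$D" /old-system/
done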

substituting /new-system and /old-system appropriately.

(This assumes you have room to keep the *.old top-level directories on the

same partition; modify it, if not.)

Partitioning Complications

Because Martin Michlmayr's downloadable firmware image was (at the time of

writing) generated from a system that assumes /dev/sda2 is the root device,

you should make sure that your root filesystem is on the disk's second

partition. If it is on the first, then you can move and/or shrink it

slightly with gparted, create a small additional partition before

it, and use fdisk to correct the order if necessary. (In fdisk, x enters expert mode, f fixes the partition order, r returns to the main menu, and w writes the table and exits.) Then, put only that disk

back into the NSLU2, and switch on. If all goes well, you should now boot

into the new distribution. Then, you can start installing packages and

re-compiling local programs, and do apt-get update, apt-get

upgrade and apt-get dist-upgrade. You might still need to

run dpkg-reconfigure tzdata, even though it should show your

correct timezone as the default choice.

Of course, if you have 2 disks connected at boot time, then there's only a

50/50 chance it will choose the correct one to boot from. (If it doesn't,

disconnect and reconnect the power and try again, or boot with only one

disk connected.) If you have set up your /etc/fstab to refer to partitions by UUID, then this will take effect when you install your own kernel (which should happen automatically as you upgrade the lenny packages). You can get a partition's UUID using dumpe2fs /dev/sda2 | grep UUID, and use UUID=(this number) in place of /dev/sda2 or whatever in fstab, as long as it's not a swap partition. One trick for getting swap to work is to put it on a partition number that exists on only one disk, and then list that partition number for every disk name in fstab (for example, both /dev/sda3 and /dev/sdb3 if swap is partition 3). The entry for the disk that really has it will be used, and the others will cause harmless errors during boot.
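As a small illustration of the UUID approach, the helper below reads dumpe2fs -h output and prints a UUID-based fstab line. Its name and the mount options and pass numbers it emits are assumptions, not taken from the article. The -h option limits dumpe2fs to the superblock summary, which is where the "Filesystem UUID:" line appears. The comments at the end show what the swap trick might look like in fstab, with partition 3 as an arbitrary example.

```shell
#!/bin/sh
# Hypothetical helper: turn `dumpe2fs -h` output (on stdin) into an fstab line.
# ASSUMPTION: "defaults" options; fsck pass 1 for /, 2 for everything else.
# Usage: dumpe2fs -h /dev/sda2 2>/dev/null | fstab_line_from_dumpe2fs / ext3
fstab_line_from_dumpe2fs() {
    mnt=$1 fstype=$2
    # dumpe2fs prints a line like "Filesystem UUID:          1b2c...."
    uuid=$(awk -F': *' '/^Filesystem UUID:/ { print $2; exit }')
    [ -n "$uuid" ] || { echo "no UUID found" >&2; return 1; }
    pass=2
    [ "$mnt" = / ] && pass=1
    printf 'UUID=%s %s %s defaults 0 %s\n' "$uuid" "$mnt" "$fstype" "$pass"
}

# Swap stays device-based; with swap on partition 3 of only one disk:
#   /dev/sda3  none  swap  sw  0  0
#   /dev/sdb3  none  swap  sw  0  0
```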

You may experience further complications on account of the differences

between ext2 and ext3 filesystems: In Debian Etch, if you wanted to

reduce the wear on a flash disk, you could tell /etc/fstab to mount the

partition as ext2, even though the installer formatted it as ext3.

Mounting as ext2 simply leaves out writing the ext3 crash-recovery journal. Apparently, however, the newer kernel in lenny cannot really mount an ext3 partition as ext2 (it tells you it is doing so, but it doesn't; see Ubuntu bug 251999). Moreover, if your fstab says the filesystem is ext2, update-initramfs will leave the ext3 module out of the initramfs, so you will get an unbootable system when you upgrade to lenny's latest kernel (although you can still boot Martin's kernel, which expects ext3). Conversely, if your filesystem really is ext2, you won't be able to boot Martin's kernel. Therefore, you have to:

- Make sure the filesystem is ext3 before booting Martin's kernel

- Make sure the filesystem is ext2 before booting your own kernel, if you

have ext2 in your /etc/fstab

To convert an ext3 partition back to ext2, connect the disk to a separate

computer and, if for example the partition is sdb2 on that

computer, make sure it is unmounted and do

e2fsck -fy /dev/sdb2
tune2fs -O ^has_journal /dev/sdb2
e2fsck -fy /dev/sdb2

and to convert it back to ext3,

tune2fs -O has_journal /dev/sdb2
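Since booting the wrong kernel against the wrong filesystem type leaves you with an unbootable NSLU2, a quick check before moving the disk back may save a round trip. This sketch (hypothetical function name) treats a filesystem whose dumpe2fs feature list includes has_journal as ext3 and anything else as ext2, and compares that with the type you name.

```shell
#!/bin/sh
# Hypothetical helper: read `dumpe2fs -h` output on stdin and compare the
# filesystem's real type (journal => ext3, none => ext2) with the one given.
# Usage: dumpe2fs -h /dev/sdb2 2>/dev/null | check_fs_type ext2
check_fs_type() {
    want=$1
    if grep -q '^Filesystem features:.*has_journal'; then
        have=ext3
    else
        have=ext2
    fi
    if [ "$have" = "$want" ]; then
        echo "ok: filesystem is $have"
    else
        echo "mismatch: filesystem is $have but fstab says $want" >&2
        return 1
    fi
}
```

Before booting Martin's kernel you would check for ext3; before booting your own kernel with ext2 in /etc/fstab, check for ext2 instead.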

Martin has filed Debian bug #519800 to suggest that the initramfs support both ext2 and ext3 no matter what fstab says, which should mean (once it is fixed) that you don't have to run tune2fs just to get a bootable system. You might still want to do so anyway, to work around the other bug (the kernel updating the journal even when ext2 is requested).

Locales Package

When doing an apt-get upgrade or dist-upgrade, make sure the

locales package is not installed, or at least that you are not

generating any locales with it. That package's new version requires too

much RAM to generate the locales; the 32MB NSLU2 cannot cope, and may

crash. If you need any locales other than C and POSIX, then you can get

them from another Linux system by copying the appropriate subdirectories of

/usr/lib/locale (and possibly /usr/share/i18n if you want

locale -m to work, too).
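A sketch of the copying step follows. It assumes the other system's root directory is reachable as an ordinary path (its disk attached, or an NFS or sshfs mount), and that its locales live in separate subdirectories of /usr/lib/locale, as described above. The function name and the locale name en_GB.utf8 are examples only. It does not copy /usr/share/i18n; add that by hand if you want locale -m to work.

```shell
#!/bin/sh
# Hypothetical helper: copy compiled locale directories from another
# system's root (SRC) into this system's root (DEST), instead of
# generating them on the 32MB NSLU2.
# Usage: copy_locales /mnt/other / en_GB.utf8
copy_locales() {
    src=$1 dest=$2
    shift 2
    mkdir -p "$dest/usr/lib/locale"
    for loc in "$@"; do
        if [ -d "$src/usr/lib/locale/$loc" ]; then
            cp -a "$src/usr/lib/locale/$loc" "$dest/usr/lib/locale/"
        else
            echo "not found: $loc" >&2
            return 1
        fi
    done
}
```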

Sound

If you have fitted a "3D Sound" USB dongle, you might find that, in the new

distribution, the audio becomes choppy and/or echoey. This seems to be on

account of an inappropriate default choice of algorithms in the ALSA

system, and it can be fixed by creating an /etc/asound.conf with

the following contents:

pcm.converter {
    type plug
    slave {
        pcm "hw:0,0"
        rate 48000
        channels 2
        format S16_LE
    }
}
pcm.!default converter

Note that this configuration deliberately bypasses ALSA's software mixing, so only one sound can play at a time. Mixing sounds in real time on an embedded system like this is likely to be more trouble than it's worth.

Unfortunately, it no longer seems possible to drive the soundcard itself at lower sample rates or with fewer channels. This is a pity, because up-converting lower-sample-rate audio (such as the mono 22.05kHz audio generated by eSpeak) not only wastes bandwidth on the USB bus but also seems to reduce the sound quality slightly, although the difference is not immense.
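To put a number on the wasted USB bandwidth: raw PCM costs the sample rate times the number of channels times the bytes per sample. The sketch assumes eSpeak's output is 16-bit, and uses the 48kHz stereo S16_LE format from the asound.conf above; the function name is hypothetical.

```shell
#!/bin/sh
# Worked arithmetic: bytes per second of raw PCM audio.
pcm_bytes_per_sec() {
    # $1 = sample rate (Hz), $2 = channels, $3 = bytes per sample
    echo $(( $1 * $2 * $3 ))
}

espeak=$(pcm_bytes_per_sec 22050 1 2)   # mono 22.05kHz, 16-bit (assumed)
card=$(pcm_bytes_per_sec 48000 2 2)     # 48kHz stereo S16_LE, as configured
echo "eSpeak output: $espeak bytes/s, sent to card: $card bytes/s"
```

With these figures the card receives 192000 bytes per second for a stream that started out at 44100, roughly 4.35 times as much.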

If you are playing MP3s, then you also have the option of getting

madplay (rather than the ALSA system) to do the resampling, and

this could theoretically be better because madplay is aware of the

original MP3 stream, but I for one can't hear the difference.

madplay file.mp3 -A -9 -R 48000 -S -o wav:- | aplay -q -D hw:0,0

On lenny (unlike on etch), recording works, too, and it can be done with

arecord -D hw:0,0 -f S16_LE -r 24000 test.wav, but the quality is

not likely to be good. (Mine had a whine in the background.)

Everything Finished

If you have done this, then you should, with luck, have an NSLU2 running lenny on the armel architecture, which has significantly faster floating-point emulation (although it's not as fast as a real floating-point processor) and, perhaps more importantly, better long-term support. (You won't be stuck when arm is dropped in the release after lenny.)

The *.old top-level directories created above can be removed when you are

sure you no longer need to retrieve anything from them, or you can rename

them to "old/bin", "old/usr", etc., and have a chroot environment in

old/. (The new kernel can run an old system in chroot, but not

vice-versa.)



Silas Brown is a legally blind computer scientist based in Cambridge UK.

He has been using heavily-customised versions of Debian Linux since

1999.

Copyright © 2009, Silas Brown. Released under the

Open Publication License

unless otherwise noted in the body of the article. Linux Gazette is not

produced, sponsored, or endorsed by its prior host, SSC, Inc.

Published in Issue 161 of Linux Gazette, April 2009

Away Mission: 2008 in Review - part 3

By Howard Dyckoff

April is another Mad Month with competing tech events. Besides the

events reviewed here, there are Black Hat Europe 2009, April 14-17, in

Amsterdam, and the USENIX LEET (Large-Scale Exploits and Emergent

Threats) conference in Boston, April 21-24.

This year will feature a major event: the Linux Collaboration Summit, organized by the Linux Foundation. The 3rd Annual Collaboration Summit will be co-located with the CELF Embedded Linux Conference and the Linux Storage and Filesystem Workshop. It takes place April 8-10 in San Francisco. More

information is here: https://events.linuxfoundation.org/events/collaboration-summit/

Web 2.0 Expo and Velocity

Over the years, the Web 2.0 event has split into the Expo, for Web production people, and the Web 2.0 Summit, for industry leaders (which is invitation-only). More recently, the Web 2.0 Expo has become a forum for social networking and Web designers.

The better bet for sysadmins and Linux hackers is the O'Reilly Velocity